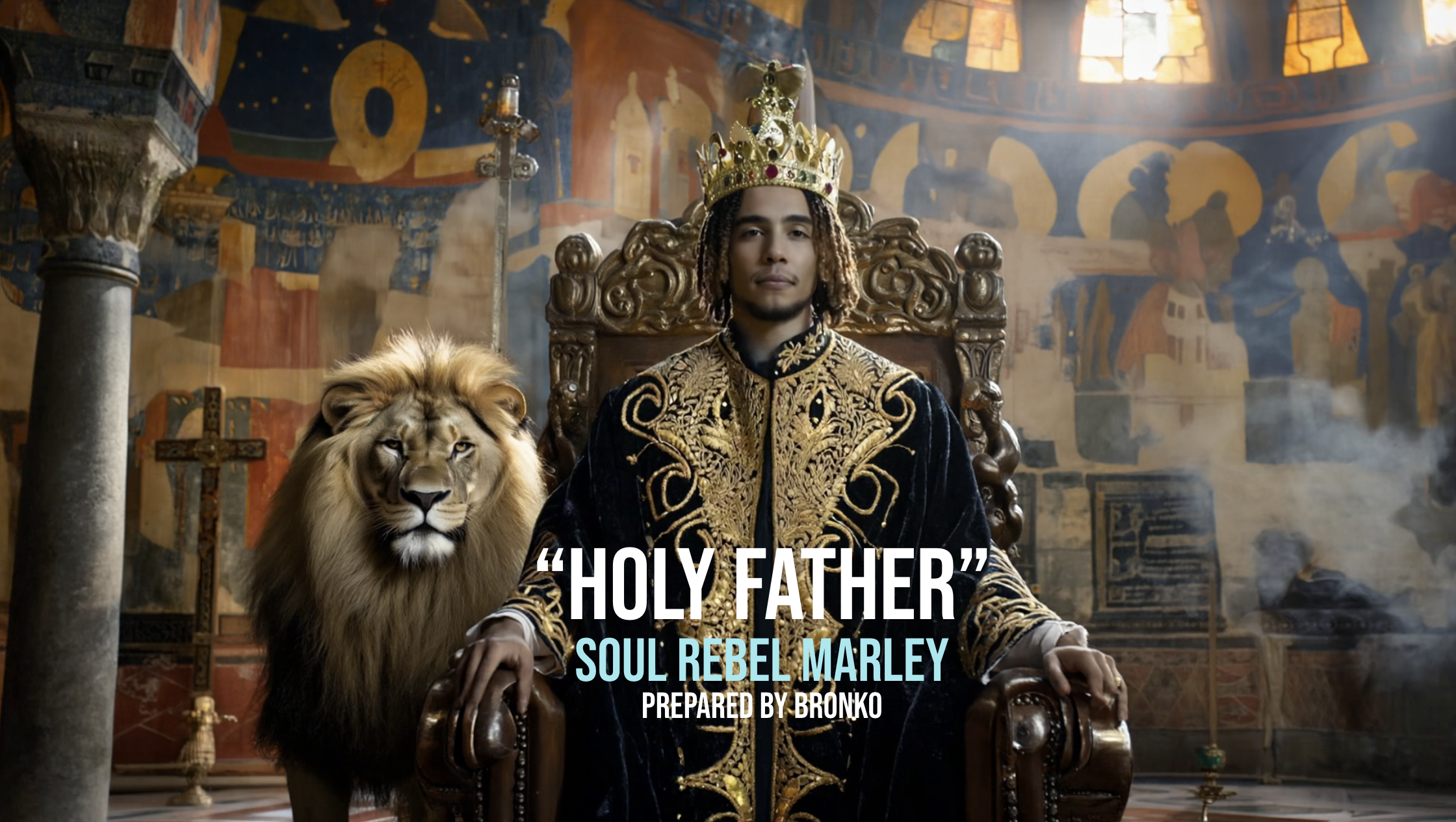

SOUL-REBEL MARLEY - “HOLY FATHER”

CATEGORY: MUSIC VIDEO

CLIENT: TUFF GONG INTERNATIONAL

PROJECT: HOLY FATHER

POSITION: PRODUCER/DIRECTOR

How do you film a spiritual pilgrimage to Ethiopia's holiest sites when you can't get your crew to Ethiopia?

PROJECT OVERVIEW

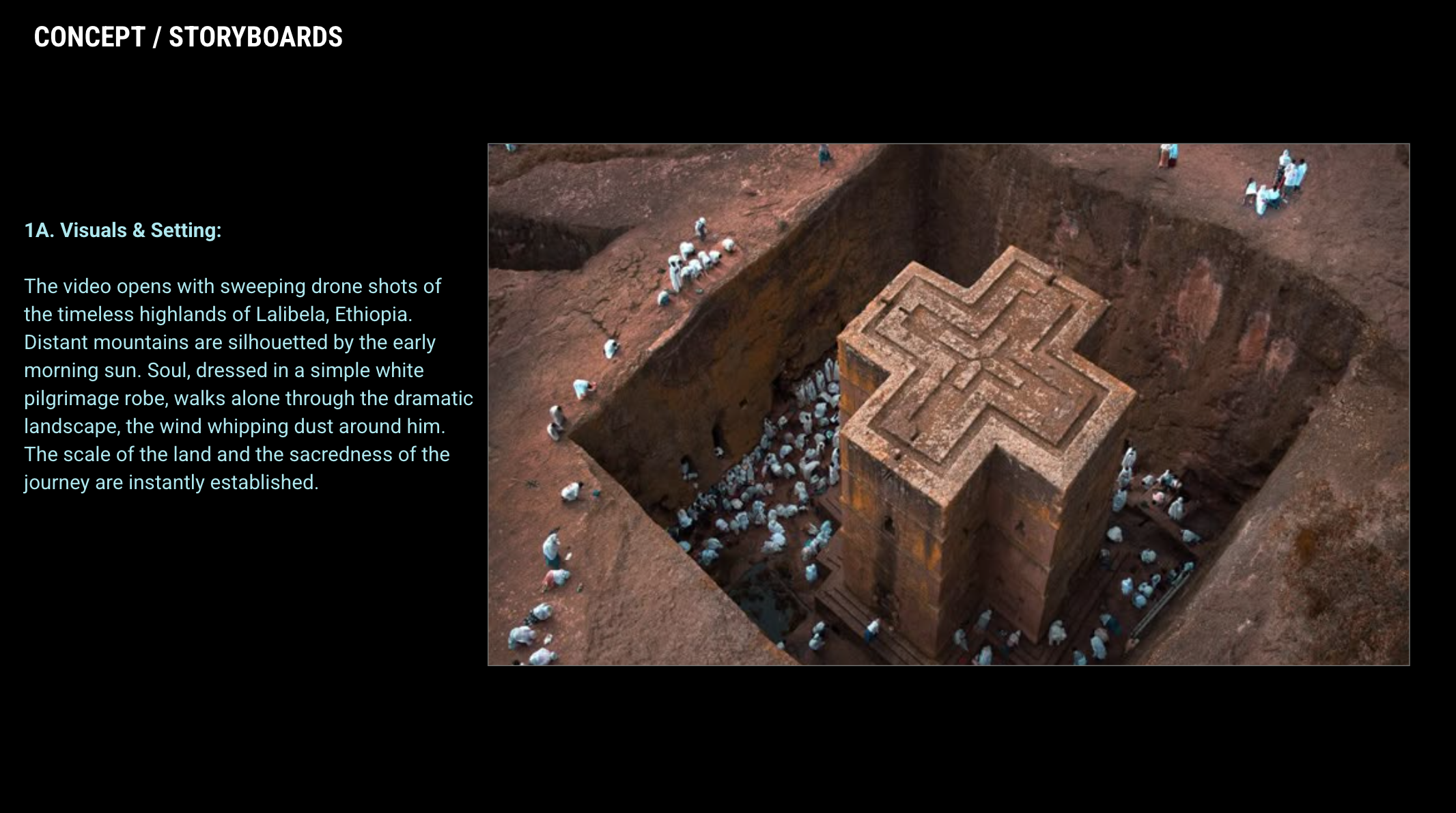

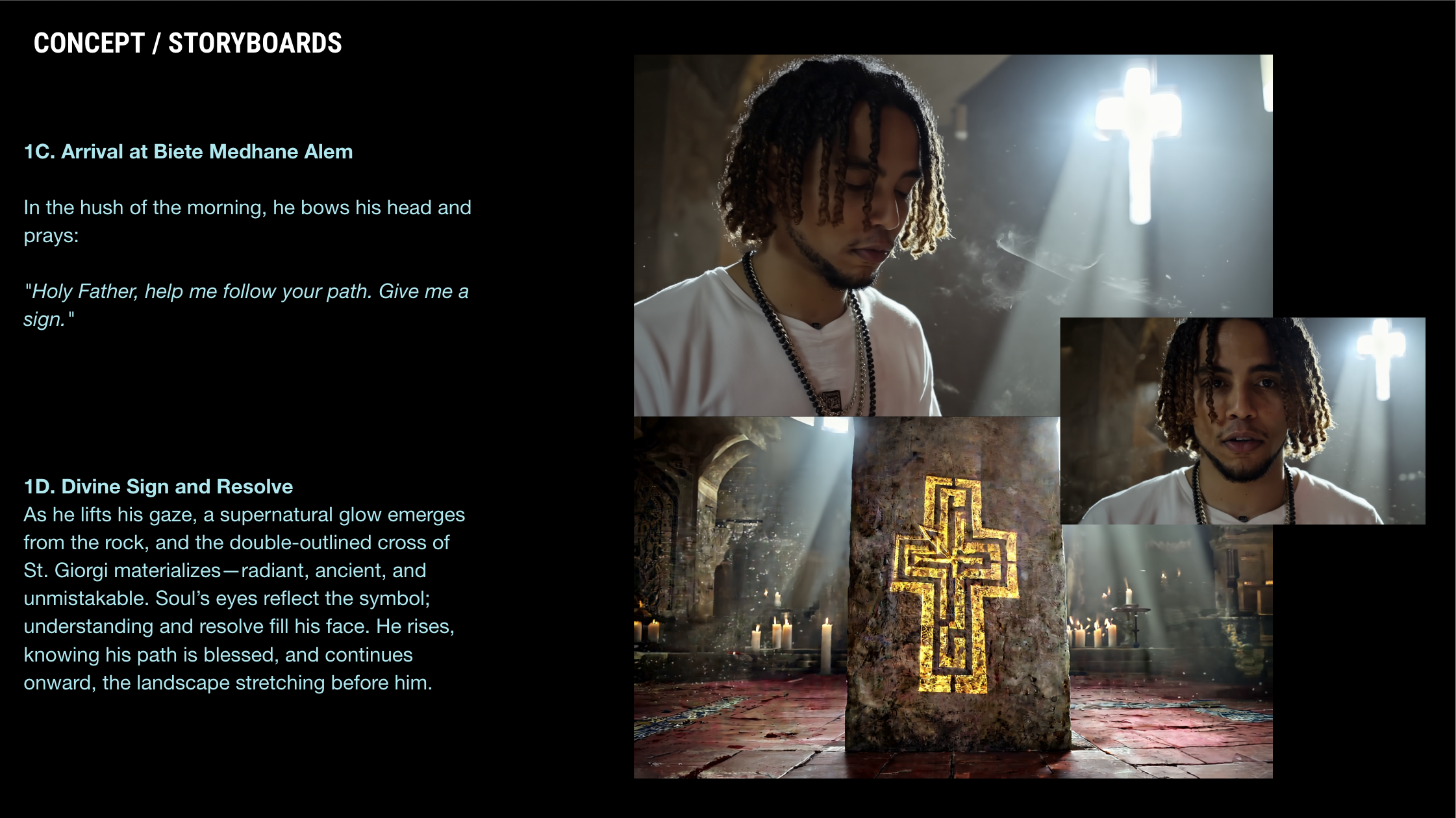

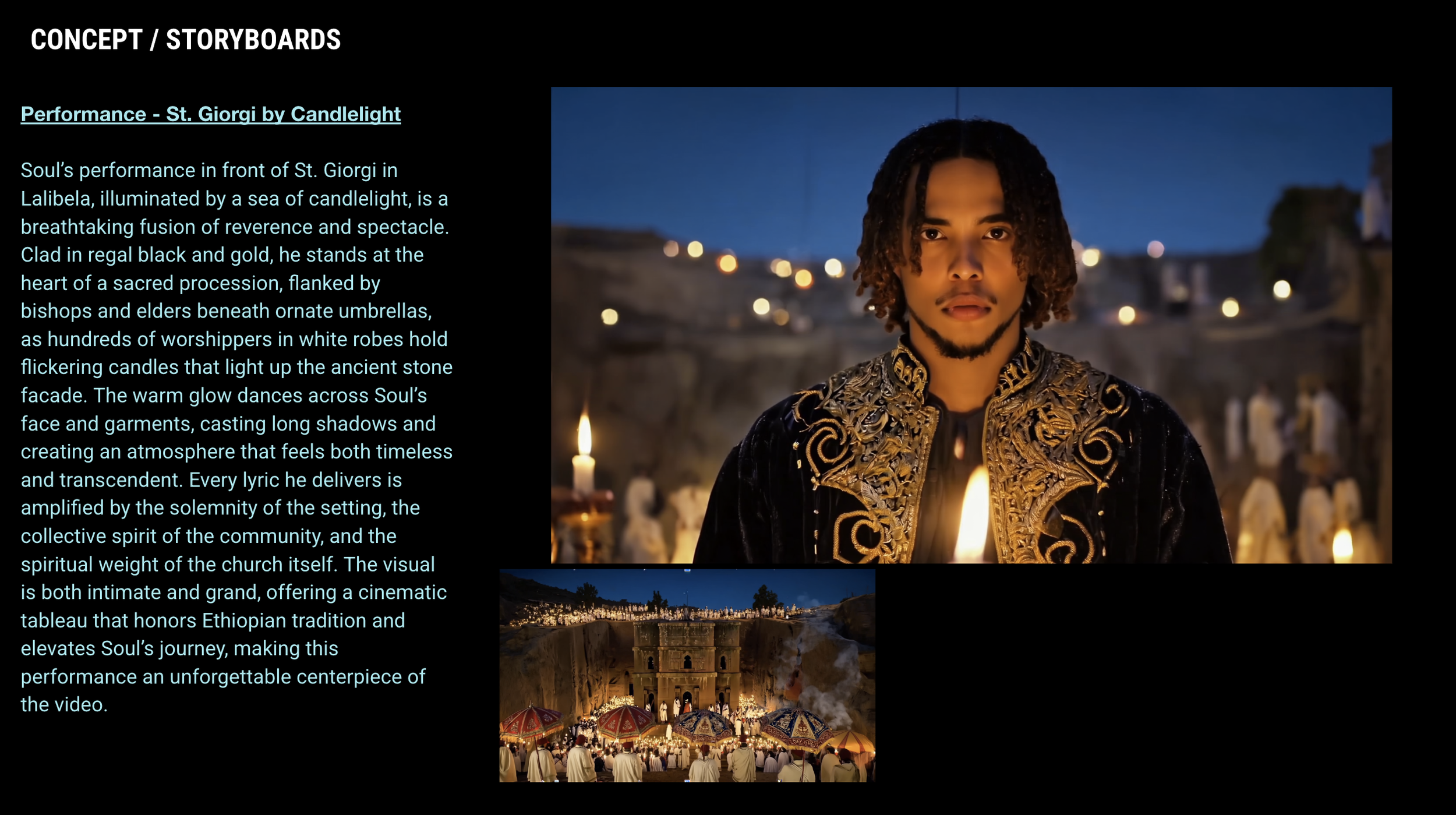

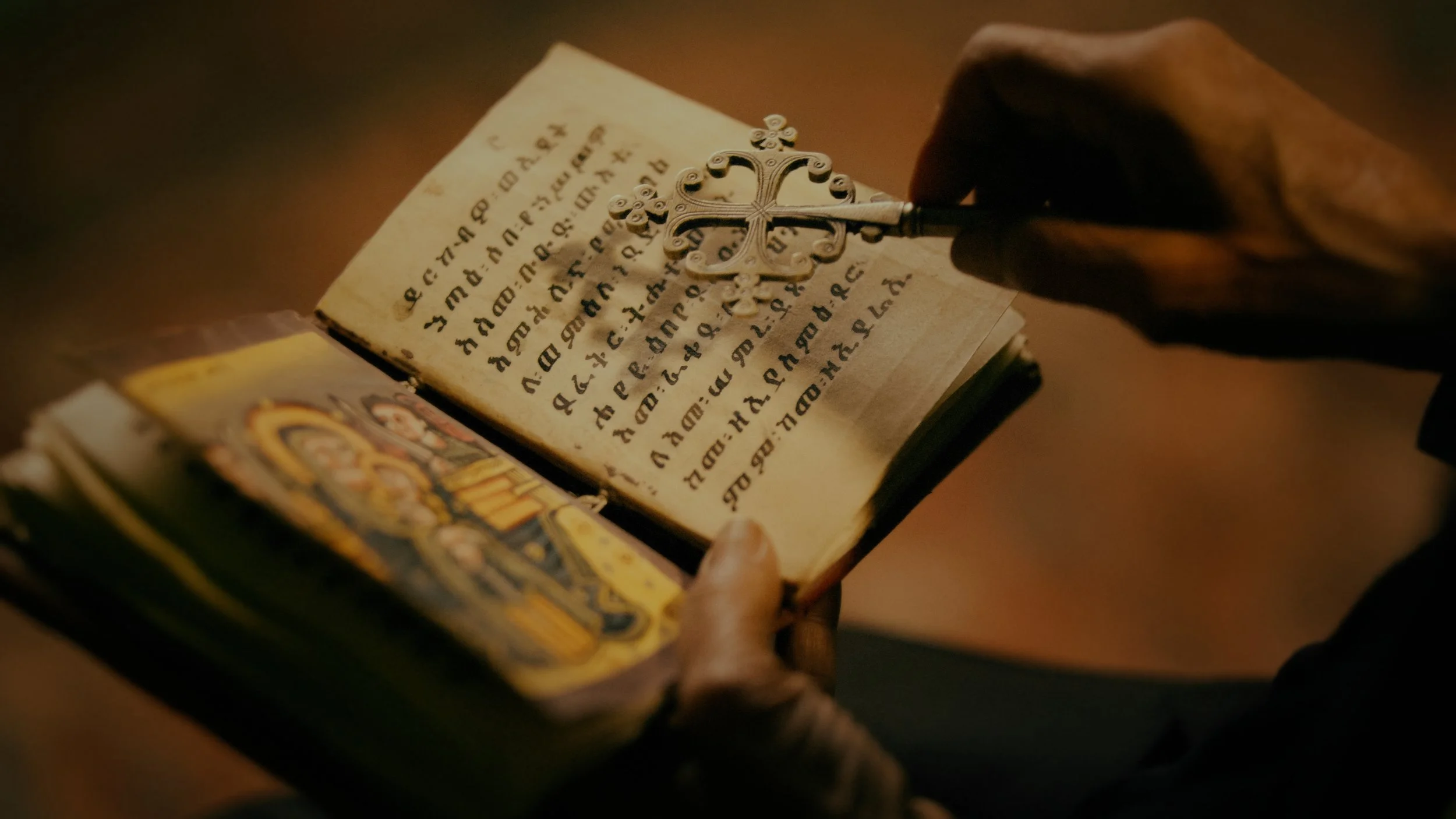

When Tuff Gong International approached us with Soul-Rebel Marley's "Holy Father," the label faced a formidable creative challenge. Its original vision, a spiritual pilgrimage filmed on location at the ancient rock-hewn churches of Lalibela, Ethiopia, had stalled because of budget constraints and filming restrictions from the Ethiopian government and church elders. With only B-roll footage and a compressed two-month timeline, the project seemed destined to fall short of its ambitious vision.

Instead of scaling back, we proposed a bold solution: combine LED wall technology with AI-generated environments to create broadcast-quality representations of Ethiopia's sacred landscapes, all shot in a Glendale studio. Using Google Veo 2, Kling 3.0, Runway ML, and Midjourney, we generated more than 100 styleframes, built immersive volumetric environments, and delivered a cinematic music video. It premiered on YouTube (100K+ views), aired on Jamaican broadcast television, and was displayed on OOH billboards across Kingston, proving that AI-native production could achieve what traditional methods couldn't afford.

PROJECT CREDITS

Director/Producer: Martyn Watts (BRONKO)

Artist: Soul-Rebel Marley

Label: Tuff Gong International

Producer: Joseph I

Director of Photography: Jacob Caron

Production Designer: Jasmine Nicholes

Wardrobe: Joseph I

Colorist: Martyn Watts

AI Workflow Design: Martyn Watts